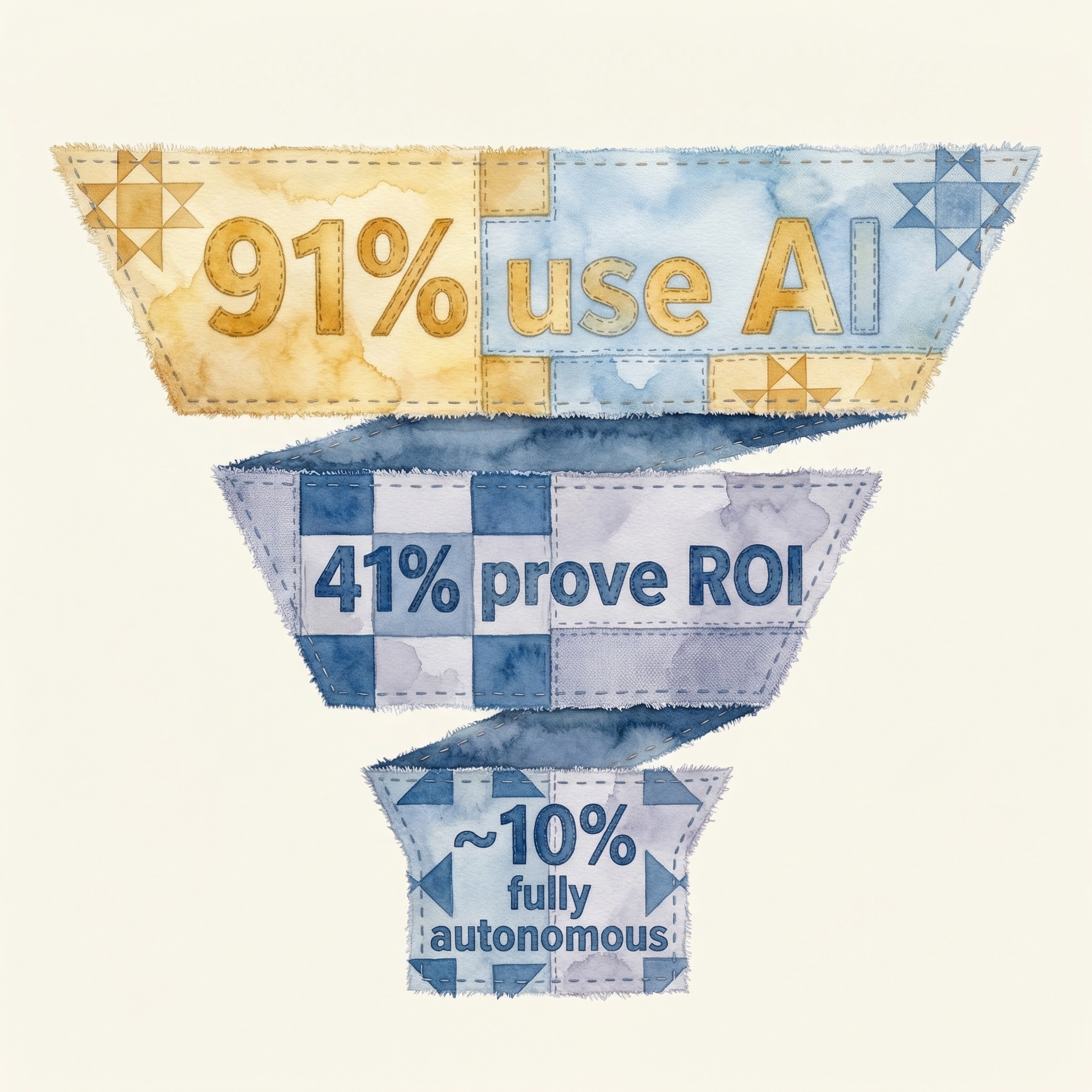

The headline numbers for AI in marketing have never looked better. According to Jasper's State of AI Marketing 2026 report, 91% of marketers now use AI in their workflows, up from 84% a year ago and a figure that would have seemed implausible just three years back. By any statistical measure, AI adoption in marketing is effectively complete. The holdouts are a small and shrinking minority.

And yet something is plainly wrong. Despite near-universal adoption, only 41% of marketing teams can prove that their AI investments deliver positive ROI — down from 49% who could make that claim last year. Adoption is rising. Proven returns are falling. That is not a healthy trend line.

This report examines the widening gap between AI adoption and AI execution in marketing. We draw on the latest research from Jasper, Gartner, DemandGen, MarTech, Harvard Business Review, and our own work with marketing teams across industries. The thesis is simple: marketing AI has an execution problem. The industry has succeeded at putting AI tools in marketers' hands. It has largely failed at turning those tools into deployed, measurable, revenue-generating workflows.

What follows is a data-driven exploration of why — and what it takes to close the gap.

1. The Adoption Landscape: Everyone Uses AI, Nobody Agrees on What That Means

The 91% adoption figure from Jasper's 2026 survey is striking but misleading in isolation. Dig into how teams actually use AI and the picture fragments quickly. The overwhelming majority of that usage falls into three categories: content drafting, brainstorming, and research. ChatGPT and Claude for writing email copy. Midjourney and DALL-E for ad concepts. Perplexity for competitive research. These are valuable applications, but they share a common trait — they all stop at generation.

Generation is the first ten percent of a campaign. The remaining ninety percent — building the email in your MAP, segmenting the list, configuring the workflow triggers, designing the landing page in your CMS, setting up UTM parameters, QA'ing across devices, scheduling the send, and monitoring delivery — is execution. And almost nobody is using AI for execution.

According to DemandGen's 2026 B2B Marketing Trends report, 65% of marketing teams now have at least one designated AI role — an "AI lead" or "prompt engineer" or "AI marketing manager." These roles are overwhelmingly focused on content generation. They make teams faster at producing draft copy, creative concepts, and strategy documents. They do not make teams faster at deploying finished campaigns.

Key finding: 91% of marketers use AI, but the vast majority use it for content generation only. The execution layer — building, configuring, deploying, and measuring campaigns across marketing platforms — remains almost entirely manual.

This distinction matters because generation without execution creates the illusion of productivity. A team can produce five campaign briefs in a morning with AI assistance and still take three weeks to deploy a single one. The backlog does not shrink — it grows. AI has made it faster to imagine campaigns without making it faster to ship them.
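The backlog effect is simple queue arithmetic. Briefs arrive at the AI-assisted drafting rate and leave at the manual deployment rate. The sketch below uses hypothetical rates chosen only to echo the example above: five briefs drafted per week, one campaign shipped every three weeks. These are not figures from the cited research.

```python
from fractions import Fraction


def backlog_after(weeks: int, briefs_per_week: Fraction, deploys_per_week: Fraction) -> Fraction:
    """Briefs still waiting for deployment after `weeks` weeks, starting from zero.

    Assumes constant rates: briefs arrive at the AI-assisted drafting rate and
    leave at the (manual) deployment rate.
    """
    backlog = Fraction(0)
    for _ in range(weeks):
        backlog += briefs_per_week
        # Deployment can only drain briefs that actually exist.
        backlog -= min(backlog, deploys_per_week)
    return backlog


# Hypothetical rates: 5 briefs drafted per week, 1 campaign shipped every 3 weeks.
DRAFTED = Fraction(5)
SHIPPED = Fraction(1, 3)

for weeks in (1, 4, 12):
    waiting = float(backlog_after(weeks, DRAFTED, SHIPPED))
    print(f"after {weeks:2d} weeks: {waiting:.1f} briefs waiting")
```

With these inputs the queue grows by 14/3 (about 4.7) briefs every week. Faster drafting only raises the inflow. Under constant rates, the backlog stops growing only when deployment capacity at least matches the drafting rate.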

2. The Execution Spectrum: From Assist to Autonomous

Not all AI usage is created equal. To understand where the industry actually stands, it helps to think about AI marketing on a maturity spectrum with four distinct stages.

Stage 1: Generate. AI produces raw content — draft copy, image concepts, subject lines, audience descriptions. A human takes that output and manually builds the campaign in the appropriate tools. This is where approximately 80% of AI marketing usage sits today.

Stage 2: Assist. AI participates in the build process itself — helping configure email templates, suggesting workflow logic, pre-filling form fields in your MAP or CMS. But a human initiates every action, reviews every output, and manually approves every step. According to a MarTech 2026 survey on AI agent deployment, 80.6% of currently deployed marketing AI agents operate in this "assist only" mode.

Stage 3: Execute with approval. AI builds and configures the full campaign — emails, workflows, landing pages, ad groups — and presents the finished result to a human for review. The human approves, requests changes, or rejects. The agent handles the doing; the human handles the deciding. The same MarTech survey found that 37.9% of organizations run agents in "execute with approval" mode — but critically, most of these are limited to a single channel or tool, not end-to-end campaigns.

Stage 4: Autonomous execution. AI handles the full lifecycle — from brief to deployment to optimization — with human oversight at the governance layer rather than the task layer. Humans set strategy, define brand guidelines, approve budgets, and review performance. AI handles everything in between. Virtually no marketing teams operate at this stage today.

"The gap between what AI agents can theoretically do and what organizations actually let them do is the defining tension in enterprise AI adoption. The technology is ahead of the trust." — MarTech 2026 AI Agent Survey

The execution spectrum reveals the core problem. The industry has clustered at Stages 1 and 2, generation and assist. The economic value of AI in marketing lives at Stages 3 and 4, execution with approval and autonomous execution. The gap between where teams are and where value accrues is enormous.
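One way to make the spectrum concrete is to encode it as an ordered type, so that tooling can ask who builds the campaign and where the human sits at each stage. This is an illustrative sketch only. The `Stage` names and helper functions are hypothetical and do not come from any vendor's product.

```python
from enum import IntEnum


class Stage(IntEnum):
    """The four stages of the execution spectrum, ordered by agent autonomy."""
    GENERATE = 1               # AI drafts raw content; a human builds the campaign
    ASSIST = 2                 # AI helps inside the build; a human initiates and approves each step
    EXECUTE_WITH_APPROVAL = 3  # AI builds the full campaign; a human reviews the finished result
    AUTONOMOUS = 4             # AI runs the lifecycle; humans govern strategy, brand, and budget


def agent_builds_campaign(stage: Stage) -> bool:
    """True when the agent, rather than a human, produces the configured campaign."""
    return stage >= Stage.EXECUTE_WITH_APPROVAL


def human_role(stage: Stage) -> str:
    """Describe the human's job at each stage."""
    return {
        Stage.GENERATE: "builds the campaign by hand from AI drafts",
        Stage.ASSIST: "initiates and approves every step",
        Stage.EXECUTE_WITH_APPROVAL: "approves, requests changes to, or rejects the finished campaign",
        Stage.AUTONOMOUS: "sets strategy, brand guidelines, and budgets; reviews performance",
    }[stage]


for stage in Stage:
    print(f"{stage.value} {stage.name}: agent builds = {agent_builds_campaign(stage)}; human {human_role(stage)}")
```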

3. Why Marketing AI Stops at Generation: The Last Mile Problem

If AI is capable of more than generation, why has the industry stalled? The answer lies in what Harvard Business Review has called the "last mile problem": the gap between a working demo and an embedded daily workflow. In marketing, the last mile is not an abstraction. It is a concrete set of technical, organizational, and trust barriers that prevent AI from moving past content creation into campaign deployment.

The integration barrier

Marketing campaigns span multiple tools — HubSpot or Marketo for email and automation, WordPress or Webflow for landing pages, Google Ads and Meta for paid media, Salesforce for CRM, Figma or Canva for design, Looker or Tableau for reporting. Each tool has its own API, its own data model, its own permissions structure. An AI agent that can write a great email is useless if it cannot also build that email in HubSpot, attach it to a workflow, configure the enrollment triggers, and connect the reporting. Generation requires language model capability. Execution requires deep integration with the marketing technology stack.

Most AI tools have no integration layer at all. They produce output — text, images, code — and leave the human to copy-paste that output into the appropriate platform. This is the marketing last mile problem in its purest form: the AI does the easy part and leaves the hard part to you.

The trust barrier

Marketing is brand-facing. A coding agent that produces buggy code wastes developer time. A marketing agent that sends a poorly formatted email to 50,000 customers damages brand reputation. The stakes of execution errors in marketing are asymmetric — a single bad deployment can undo months of brand building. This asymmetry makes marketing leaders rationally cautious about granting agents execution authority.

The trust barrier is not irrational, but it is often solved incorrectly. The solution is not to keep agents in generation-only mode forever. The solution is to build proper sandbox and approval infrastructure — the ability for agents to execute in a staging environment where humans can review the complete output before it goes live. Very few AI marketing tools provide this capability today.

The workflow fragmentation barrier

Unlike coding — where most work happens inside an IDE with a clear input (code) and output (deployed software) — marketing workflows are deeply fragmented. A single campaign might require work in six to ten different tools. Each tool represents a separate context switch, a separate login, a separate set of conventions. AI agents that operate within a single tool (Jasper for copy, Canva for design, HubSpot for email) cannot orchestrate across the full campaign lifecycle. And tools that try to replace the entire stack with a single platform (the all-in-one approach) invariably lack the depth that teams need in any individual tool.

The result is a structural mismatch. AI agents are good at deep, focused work within a defined context. Marketing campaigns require broad, coordinated work across many contexts. Bridging that mismatch is the central engineering challenge of marketing AI — and most vendors have not even attempted it.
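At its core, coordinating work across tools is a dependency-ordering problem. The landing page and the email cannot be built before the copy and assets exist, and reporting cannot be wired up until links and workflows are in place. The minimal sketch below uses Python's standard `graphlib` module. The step names, tool labels, and dependencies are hypothetical, and nothing in it calls a real platform.

```python
from graphlib import TopologicalSorter

# Hypothetical campaign plan: step -> (tool it runs in, steps it depends on).
# Tool labels are generic; nothing here calls a real platform API.
STEPS: dict[str, tuple[str, list[str]]] = {
    "write_copy": ("copy tool", []),
    "design_assets": ("design tool", []),
    "build_landing_page": ("CMS", ["write_copy", "design_assets"]),
    "build_email": ("MAP", ["write_copy", "design_assets"]),
    "add_utm_links": ("MAP", ["build_email", "build_landing_page"]),
    "configure_workflow": ("MAP", ["build_email"]),
    "connect_reporting": ("BI tool", ["configure_workflow", "add_utm_links"]),
}


def execution_order(steps: dict[str, tuple[str, list[str]]]) -> list[str]:
    """Order steps so each runs after its dependencies; raises graphlib.CycleError on a cycle."""
    graph = {name: set(deps) for name, (_tool, deps) in steps.items()}
    return list(TopologicalSorter(graph).static_order())


def tool_switches(order: list[str], steps: dict[str, tuple[str, list[str]]]) -> int:
    """Count consecutive steps that change tools: a rough proxy for context switches."""
    tools = [steps[name][0] for name in order]
    return sum(1 for prev, nxt in zip(tools, tools[1:]) if prev != nxt)


order = execution_order(STEPS)
print(order)
print("tool switches:", tool_switches(order, STEPS))
```

A real orchestrator would also need per-tool credentials, retries, and rollback. The point of the sketch is narrower: once the cross-tool sequence is explicit data, an agent can reason over it rather than working inside one tool at a time.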

4. The ROI Paradox: More AI Spend, Less Proven Return

Gartner projects $201.9 billion in global agentic AI spending in 2026, with marketing representing one of the largest enterprise spending categories. A separate Gartner forecast indicates that 40% of enterprise applications will have embedded AI agents by the end of 2026. The money is flowing. The infrastructure is being built.

And yet ROI attribution is getting worse, not better. The Jasper report found that only 41% of marketers can prove positive ROI from their AI investments, down from 49% the prior year. How can spending increase while proven returns decrease?

Three dynamics explain the paradox.

First, generation ROI is inherently hard to measure. If AI helps you write an email 40% faster, what is that worth? You saved time, but time savings only translate to revenue if that time is redeployed to higher-value work. Most organizations cannot track that chain of causation. The ROI of generating content faster is real but diffuse and difficult to isolate.
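The chain of causation can be written out as arithmetic. Every input in this sketch is hypothetical. It also reads "40% faster" as "40% less drafting time per email", which is only one possible reading. What the sketch shows is that the result scales linearly with `redeployed_fraction`, a quantity few teams track.

```python
def realized_monthly_value(
    baseline_hours_per_email: float,
    time_reduction: float,
    emails_per_month: int,
    redeployed_fraction: float,
    value_per_redeployed_hour: float,
) -> float:
    """Value of faster drafting, counting only saved hours that are actually redeployed.

    time_reduction=0.40 is read as "40% less drafting time per email" (one reading of
    "40% faster"). redeployed_fraction is the share of saved hours spent on
    higher-value work, the link most organizations cannot observe.
    """
    hours_saved = baseline_hours_per_email * time_reduction * emails_per_month
    return hours_saved * redeployed_fraction * value_per_redeployed_hour


# Same time savings, different assumptions about what happens to the freed hours.
for share in (0.0, 0.5, 1.0):
    print(share, realized_monthly_value(5, 0.40, 20, share, 100))
```

The 40 hours saved are identical in every row. Only the unmeasured redeployment share changes the answer.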

Second, tool proliferation dilutes measurability. The average marketing team now uses multiple AI tools across content, design, analytics, and workflow. Each tool claims credit for efficiency gains, but none can isolate its own contribution to revenue. The more AI tools a team adopts, the harder it becomes to prove the ROI of any single one.

Third, execution-stage AI has clearer ROI but almost nobody uses it. If an AI agent deploys a complete campaign that generates pipeline, the attribution is straightforward — the agent built and deployed the campaign, the campaign generated revenue. Execution-stage AI has clean ROI because it produces the unit of work (a deployed campaign) that connects directly to business outcomes. But as we have established, almost no teams operate at the execution stage.

The ROI paradox, summarized: The form of AI that most teams use (generation) has the hardest-to-measure ROI. The form of AI that has the clearest ROI (execution) is the form that almost nobody uses. The industry is spending more on the hard-to-measure form while the easy-to-measure form remains underdeveloped.

This paradox has real consequences. CMOs under pressure to justify AI budgets face a catch-22: they need ROI data to secure continued investment, but the AI tools available to them produce efficiency gains that resist quantification. The path out of the catch-22 is to move up the execution spectrum — from generation to deployment — where ROI becomes measurable at the campaign level.
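Here is a sketch of campaign-level measurement once the deployed campaign is the unit of work. It assumes an attribution model already assigns revenue to each campaign, which is the hard part in practice. The class, field names, and example figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeployedCampaign:
    """A shipped campaign as the unit of measurement (hypothetical schema).

    attributed_revenue assumes an attribution model already assigns revenue
    to campaigns; choosing that model is outside this sketch.
    """
    name: str
    cost: float                # media, tooling, and labor allocated to this campaign
    attributed_revenue: float


def roi(c: DeployedCampaign) -> float:
    """Standard ROI: (return - cost) / cost."""
    if c.cost <= 0:
        raise ValueError(f"{c.name}: cost must be positive")
    return (c.attributed_revenue - c.cost) / c.cost


def share_with_positive_roi(campaigns: list[DeployedCampaign]) -> float:
    """Fraction of campaigns that returned more than they cost."""
    if not campaigns:
        return 0.0
    return sum(roi(c) > 0 for c in campaigns) / len(campaigns)


q1 = [
    DeployedCampaign("abm-wave-1", cost=12_000, attributed_revenue=30_000),
    DeployedCampaign("webinar-promo", cost=8_000, attributed_revenue=6_000),
]
for c in q1:
    print(f"{c.name}: ROI {roi(c):+.0%}")
print(f"share with positive ROI: {share_with_positive_roi(q1):.0%}")
```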

5. What Autonomous Execution Actually Requires

If the path forward is to move AI from generation to execution, what does that require technically? Based on our work with marketing teams and the available research, execution-stage AI demands four capabilities that generation-stage AI does not.

Deep API integration across the marketing stack

Agents must be able to read and write to the systems where campaigns live — MAPs, CMSes, ad platforms, CRMs, analytics tools. This is not a matter of connecting to one or two tools. A typical B2B marketing stack includes eight to twelve platforms that participate in campaign execution. The agent needs authenticated, write-level API access to all of them, with an understanding of each platform's data model, field mappings, and configuration conventions. This is engineering-intensive work that cannot be shortcut with browser automation or screen scraping. As we have argued previously, AI agents need APIs, not screenshots.
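Per-platform data models and field mappings are easier to reason about behind a common interface. The sketch below is a hypothetical design, not any vendor's SDK. `PlatformConnector`, `map_fields`, the in-memory stand-in, and all field names are invented for illustration. A production adapter would wrap each platform's documented API and authentication.

```python
from typing import Protocol


class PlatformConnector(Protocol):
    """Hypothetical interface an agent codes against. Each implementation would wrap
    one vendor's documented, authenticated write API (MAP, CMS, ads, CRM)."""

    name: str

    def create(self, object_type: str, fields: dict[str, str]) -> str:
        """Create an object on the platform and return its ID."""
        ...


def map_fields(canonical: dict[str, str], mapping: dict[str, str]) -> dict[str, str]:
    """Translate canonical campaign fields into one platform's field names.

    Raises KeyError on unmapped fields rather than silently dropping data.
    """
    missing = set(canonical) - set(mapping)
    if missing:
        raise KeyError(f"no field mapping for: {sorted(missing)}")
    return {mapping[key]: value for key, value in canonical.items()}


class InMemoryConnector:
    """Stand-in platform for dry runs and tests; stores objects instead of calling an API."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.objects: dict[str, tuple[str, dict[str, str]]] = {}

    def create(self, object_type: str, fields: dict[str, str]) -> str:
        object_id = f"{self.name}-{len(self.objects) + 1}"
        self.objects[object_id] = (object_type, fields)
        return object_id


# Illustrative mapping only; real field names come from each platform's API reference.
EMAIL_FIELD_MAP = {"subject": "email_subject", "preview": "preview_text", "body": "html_body"}


def push_email(conn: PlatformConnector, email: dict[str, str]) -> str:
    """Write one canonical email to a platform through its connector."""
    return conn.create("email", map_fields(email, EMAIL_FIELD_MAP))


map_platform = InMemoryConnector("map")
email_id = push_email(map_platform, {"subject": "Your Q2 plan", "preview": "3 ideas", "body": "<p>Hi</p>"})
print(email_id, map_platform.objects[email_id])
```

Failing loudly on an unmapped field is a deliberate choice. A silently dropped field is exactly the kind of execution error that reaches customers.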

Sandbox and staging environments

Execution without review is reckless. But review without execution is just generation with extra steps. The resolution is a sandbox model — agents build complete campaigns in a staging environment where every element can be reviewed, tested, and approved before going live. This requires the agent to produce not just content but fully configured campaign artifacts: the email with its template, subject line, preview text, segments, exclusions, workflow triggers, and send schedule. The human reviews the finished campaign, not the draft copy.
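The sandbox model can be sketched as a complete artifact whose only path to production runs through a recorded human decision. The fields mirror the list above. The class, status names, and method names are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    STAGED = "staged"
    APPROVED = "approved"
    CHANGES_REQUESTED = "changes_requested"
    REJECTED = "rejected"
    LIVE = "live"


REVIEW_DECISIONS = {Status.APPROVED, Status.CHANGES_REQUESTED, Status.REJECTED}


@dataclass
class StagedEmailCampaign:
    """A fully configured campaign artifact held in staging (hypothetical schema)."""
    template: str
    subject: str
    preview_text: str
    segments: list[str]
    exclusions: list[str]
    workflow_trigger: str
    send_at: str  # ISO 8601 timestamp
    status: Status = Status.STAGED
    review_notes: list[str] = field(default_factory=list)

    def missing_config(self) -> list[str]:
        """Required settings that are still empty; a reviewer should see none."""
        required = ["template", "subject", "preview_text", "segments", "workflow_trigger", "send_at"]
        return [name for name in required if not getattr(self, name)]

    def review(self, decision: Status, note: str = "") -> None:
        """Record the human decision: approve, request changes, or reject."""
        if self.status is not Status.STAGED:
            raise RuntimeError("only staged campaigns can be reviewed")
        if decision not in REVIEW_DECISIONS:
            raise ValueError(f"not a review decision: {decision}")
        if note:
            self.review_notes.append(note)
        self.status = decision

    def go_live(self) -> None:
        """The only path to production runs through an approval."""
        if self.status is not Status.APPROVED:
            raise PermissionError("campaign has not been approved")
        self.status = Status.LIVE


campaign = StagedEmailCampaign(
    template="newsletter-v3", subject="Your Q2 plan", preview_text="3 ideas inside",
    segments=["trial-users"], exclusions=["customers"], workflow_trigger="trial_day_7",
    send_at="2026-04-02T09:00:00Z",
)
print(campaign.missing_config())
campaign.review(Status.APPROVED, note="QA'd on mobile and desktop")
campaign.go_live()
print(campaign.status)
```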

Brand governance as code

When agents execute, brand governance cannot rely on human memory or tribal knowledge. Voice guidelines, design systems, compliance rules, and approval hierarchies must be codified as machine-readable constraints that agents can follow deterministically. This means brand guidelines that are structured rulesets rather than PDF documents: acceptable color values, font hierarchies, tone parameters, legal disclaimers by market, compliance flags by industry. Every team, in any region or market, faces the same requirement. Governance must be explicit, not implicit.
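Here is a minimal sketch of brand rules expressed as data plus a pure checking function. The palette, phrase list, and placeholder disclaimers are hypothetical. The banned-phrase list is a crude stand-in for real tone parameters, which need more than string matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BrandRules:
    """Brand guidelines as data instead of a PDF. All values below are hypothetical."""
    allowed_colors: frozenset[str]         # uppercase hex values from the design system
    banned_phrases: tuple[str, ...]        # a crude stand-in for tone parameters
    disclaimers_by_market: dict[str, str]  # required legal text, keyed by market


def check_asset(rules: BrandRules, market: str, body: str, colors: set[str]) -> list[str]:
    """Return every violation found; an empty list means the asset passes."""
    violations = []
    for color in sorted({c.upper() for c in colors} - rules.allowed_colors):
        violations.append(f"off-palette color {color}")
    lowered = body.lower()
    for phrase in rules.banned_phrases:
        if phrase.lower() in lowered:
            violations.append(f"banned phrase {phrase!r}")
    disclaimer = rules.disclaimers_by_market.get(market)
    if disclaimer is None:
        violations.append(f"no disclaimer defined for market {market}")
    elif disclaimer not in body:
        violations.append(f"missing {market} disclaimer")
    return violations


RULES = BrandRules(
    allowed_colors=frozenset({"#0B1F3A", "#FFFFFF"}),
    banned_phrases=("world-class", "synergy"),
    disclaimers_by_market={"US": "[US legal footer]", "EU": "[EU legal footer]"},
)

print(check_asset(RULES, "EU", "Meet our world-class platform. [EU legal footer]", {"#0b1f3a", "#FF0000"}))
```

The rules are plain data and the check is a pure function, so the same asset always gets the same verdict. That is the practical meaning of following constraints "deterministically". A market with no defined disclaimer is reported as a violation rather than passed silently.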

Closed-loop performance feedback

Generation-stage AI is stateless — it produces output without learning from outcomes. Execution-stage AI must be stateful. When an agent deploys a campaign, it should ingest the performance data — open rates, click rates, conversion rates, pipeline generated — and use that data to inform future campaigns. This closed-loop learning is what separates a tool that produces output from a system that improves over time. According to ALM Corp's research, marketing teams that have implemented closed-loop AI execution bring campaigns to market 75% faster than those using AI for generation alone.
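The stateful part can be sketched in a few lines, assuming per-campaign counts can be pulled back from the sending platform. Rates here are per delivered email (some teams report clicks per open instead). The `min_delivered` threshold is an arbitrary guard against over-reading small sends, not a statistical test. The class names and sample numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CampaignResult:
    """Raw counts pulled back from the sending platform for one campaign (hypothetical schema)."""
    variant: str      # e.g. the subject-line style or offer being compared
    delivered: int
    opens: int
    clicks: int
    conversions: int


class FeedbackLoop:
    """Accumulates results across campaigns so the next build can use them."""

    def __init__(self, min_delivered: int = 1000) -> None:
        self.min_delivered = min_delivered  # arbitrary guard against reading too much into small sends
        self.totals: dict[str, list[int]] = {}  # variant -> [delivered, opens, clicks, conversions]

    def ingest(self, result: CampaignResult) -> None:
        totals = self.totals.setdefault(result.variant, [0, 0, 0, 0])
        for i, count in enumerate((result.delivered, result.opens, result.clicks, result.conversions)):
            totals[i] += count

    def rates(self, variant: str) -> dict[str, float]:
        """Open, click, and conversion rates per delivered email."""
        delivered, opens, clicks, conversions = self.totals.get(variant, [0, 0, 0, 0])
        if delivered == 0:
            return {"open": 0.0, "click": 0.0, "conversion": 0.0}
        return {
            "open": opens / delivered,
            "click": clicks / delivered,
            "conversion": conversions / delivered,
        }

    def recommend(self) -> Optional[str]:
        """Variant with the best conversion rate among those with enough data, if any."""
        eligible = [v for v, t in self.totals.items() if t[0] >= self.min_delivered]
        return max(eligible, key=lambda v: self.rates(v)["conversion"], default=None)


loop = FeedbackLoop(min_delivered=1000)
loop.ingest(CampaignResult("question", delivered=2000, opens=600, clicks=80, conversions=20))
loop.ingest(CampaignResult("benefit", delivered=2000, opens=500, clicks=100, conversions=30))
loop.ingest(CampaignResult("urgency", delivered=500, opens=300, clicks=90, conversions=40))
print(loop.recommend(), loop.rates("benefit"))
```

In this example the "urgency" variant has the highest raw rates but is ignored because its send was too small. That is the kind of judgment a closed loop has to make explicitly.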

"The last-mile problem with AI isn't capability — it's integration. Most AI tools work brilliantly in isolation and fail completely when asked to operate within the messy reality of existing enterprise workflows." — Harvard Business Review

6. The Sameness Problem: When Everyone Generates, Nobody Differentiates

There is an additional consequence of the industry's fixation on generation-stage AI that deserves separate attention. When every marketing team uses the same AI tools to generate content, the output converges. According to Growth Syndicate's 2026 brand voice research, 72% of marketing leaders now say that AI-generated content is actively hurting brand distinction in their market. The same tools, trained on the same data, prompted by marketers reading the same best-practice guides, produce content that sounds the same.

This is not a hypothetical concern — it is a measurable one. B2B email open rates have declined across the industry even as send volumes have increased. Paid media click-through rates are flattening even as ad creative iteration has accelerated. More content, generated faster, is producing diminishing returns because the content lacks differentiation.

The solution is not to stop using AI for generation. The solution is to invest the time savings from AI-generated content into differentiated execution — hyper-personalized campaigns, account-specific landing pages, dynamic content paths that respond to buyer behavior in real time. These execution-heavy approaches are what create brand distinction. But they require the kind of execution-stage AI that most teams do not have.

This is the compounding irony of the current moment. AI generation creates a sameness problem. The solution to the sameness problem is AI execution. But the industry has invested overwhelmingly in generation and underinvested in execution.

7. The Path Forward: Incremental Adoption, One Workflow at a Time

The gap between where marketing AI is (generation) and where it needs to be (execution) is large, but it is not unbridgeable. The organizations making the most progress share a common approach: they are not trying to achieve autonomous execution across their entire marketing operation overnight. They are picking one workflow, automating it end-to-end, proving the ROI, and expanding from there.

The playbook looks like this:

Step 1: Identify your highest-volume, most-repetitive campaign type. For many B2B teams, this is the account-based outreach sequence — personalized emails, matching landing pages, and follow-up workflows for a target account list. For consumer teams, it might be product launch campaigns or promotional sequences. Pick the campaign type where you run the most plays with the most consistent structure.

Step 2: Map the full execution workflow. Document every step from brief to deployment, including every tool touched, every handoff between people, and every QA check performed. Most teams are shocked by how many manual steps exist in a single campaign deployment. The average enterprise campaign involves 35 to 50 discrete steps across four to six platforms.

Step 3: Automate the execution layer, not just the generation layer. Move from "AI writes the copy" to "AI builds and configures the complete campaign." This is where the shift from assist mode to execute mode happens — and it requires an AI system with real integrations into your marketing stack, not just a content generation API.

Step 4: Measure deployment velocity and campaign ROI, not just content output. The metric that matters is not "how many emails did AI draft" but "how many campaigns went live this month and what pipeline did they generate." Shift measurement from generation metrics to execution metrics.

Step 5: Expand to the next workflow. Once one campaign type is running in execute-with-approval mode, take the same approach to the next highest-volume workflow. Compound the gains sequentially.
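Steps 2 and 4 both come down to counting the right things. The sketch below tallies a workflow map (steps, platforms, handoffs, manual work) and computes execution metrics instead of generation metrics. The schema, step list, and numbers are hypothetical, and a real map would be far longer than five rows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowStep:
    """One row of a Step 2 workflow map (hypothetical schema)."""
    name: str
    platform: str
    owner: str    # person or role doing the work today
    manual: bool  # True if a human performs the step by hand


def summarize_map(steps: list[WorkflowStep]) -> dict[str, int]:
    """Step 2 tallies: steps, distinct platforms, owner-to-owner handoffs, manual steps."""
    handoffs = sum(1 for prev, nxt in zip(steps, steps[1:]) if prev.owner != nxt.owner)
    return {
        "steps": len(steps),
        "platforms": len({s.platform for s in steps}),
        "handoffs": handoffs,
        "manual_steps": sum(s.manual for s in steps),
    }


def execution_metrics(drafts: int, campaigns_live: int, pipeline: float) -> dict[str, float]:
    """Step 4 metrics: how much drafted work shipped, and what each live campaign produced."""
    return {
        "ship_rate": campaigns_live / drafts if drafts else 0.0,
        "pipeline_per_live_campaign": pipeline / campaigns_live if campaigns_live else 0.0,
    }


workflow = [
    WorkflowStep("write brief", "docs", "strategist", manual=True),
    WorkflowStep("draft copy", "AI writing tool", "copywriter", manual=False),
    WorkflowStep("build email", "MAP", "marketing ops", manual=True),
    WorkflowStep("build landing page", "CMS", "web team", manual=True),
    WorkflowStep("QA and schedule", "MAP", "marketing ops", manual=True),
]
print(summarize_map(workflow))
print(execution_metrics(drafts=20, campaigns_live=4, pipeline=200_000.0))
```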

This incremental approach works because it addresses the trust barrier directly. Marketers see a complete campaign built by an agent, review it, approve it, watch it perform. Each successful cycle builds confidence to grant the agent more responsibility. Trust is earned through demonstrated competence, not demanded through capability claims.
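The trust-building loop can be written as an explicit promotion rule, so that granting an agent more responsibility becomes a visible policy rather than a gut call. This sketch assumes each campaign review is logged as approved without changes (`True`) or not (`False`). The threshold of ten and the cap at execute-with-approval are illustrative choices, not recommendations from the cited research.

```python
from enum import IntEnum


class Mode(IntEnum):
    """Execution-spectrum stages, numbered as in section 2."""
    GENERATE = 1
    ASSIST = 2
    EXECUTE_WITH_APPROVAL = 3
    AUTONOMOUS = 4


def next_mode(current: Mode, approved_as_is: list[bool], streak_needed: int = 10) -> Mode:
    """Promote a workflow one stage after `streak_needed` consecutive clean approvals.

    approved_as_is holds one entry per reviewed campaign, oldest first: True if the
    human approved it without changes. Promotion is capped at EXECUTE_WITH_APPROVAL;
    moving to AUTONOMOUS is left as an explicit governance decision.
    """
    if streak_needed < 1:
        raise ValueError("streak_needed must be at least 1")
    recent = approved_as_is[-streak_needed:]
    earned = len(recent) == streak_needed and all(recent)
    if earned and current < Mode.EXECUTE_WITH_APPROVAL:
        return Mode(current + 1)
    return current


history = [True] * 10
print(next_mode(Mode.ASSIST, history).name)
print(next_mode(Mode.ASSIST, history[:-1] + [False]).name)
```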

8. Methodology and Sources

This report synthesizes findings from seven primary sources published between late 2025 and early 2026:

- Jasper, "The State of AI Marketing 2026" — survey of 1,800+ marketers across industries on AI adoption, usage patterns, and ROI measurement.

- Gartner, "Agentic AI Spending Forecast 2025-2028" — enterprise spending projections for agentic AI across sectors, including marketing.

- MarTech, "2026 AI Agents in Marketing Survey" — practitioner survey on agent deployment modes, trust levels, and implementation status.

- DemandGen, "2026 B2B Marketing Trends Report" — analysis of B2B marketing team structures, AI staffing, and operational maturity.

- Harvard Business Review, "The Last-Mile Problem with AI" — framework for understanding the gap between AI demos and embedded enterprise workflows.

- ALM Corp, "AI Marketing Automation Benchmarks 2026" — performance benchmarks for teams using AI at various maturity stages.

- Growth Syndicate, "Brand Voice in the Age of AI Marketing" — survey of 500+ marketing leaders on brand differentiation concerns related to AI content.

All statistics cited in this report are attributed to their source and linked where available. Where multiple sources report similar findings, we have used the most recent figure. This report reflects data available as of March 2026.